AI in Finance Has a Trust Problem

April 29, 2026

By Todd McElhatton, COFO at Zuora

Most finance teams are already using AI, but the time for experimentation is over. Boards and investors now expect measurable outcomes.

In a recent Zuora survey conducted by The Harris Poll, 92% of finance and accounting teams said they are already using AI in some capacity. Yet the impact remains limited. Only 28% of finance leaders say they are seeing measurable financial results today, even as investment continues to grow.

This lines up with what I’m seeing in the market. AI is showing up in workflows, but teams are still working through how to rely on the outputs in a way that holds up under scrutiny. It’s a familiar challenge for finance: measuring and trusting outputs that aren’t fully verifiable.

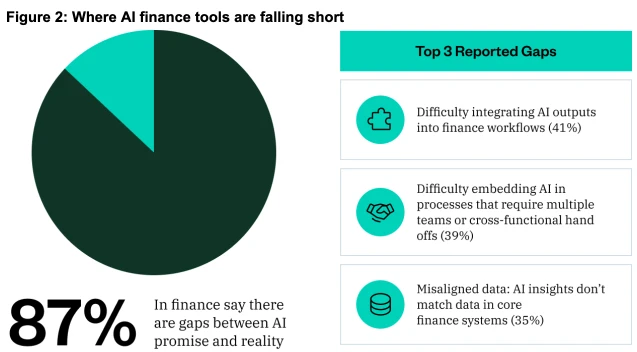

The Promise vs Reality Gap

87% of leaders say there is a gap between what AI promises and what it delivers in real financial workflows. Teams struggle to integrate AI outputs into processes like billing, revenue recognition, and the close, and often cannot explain or audit how those outputs were generated.

My take: Finance depends on traceability. Every number has to tie back to a source, move through systems, and hold up under scrutiny, especially when you’re the one signing off on it. Decisions in finance require precision, so outputs still need to be evaluated and validated.

Full Confidence in AI Isn’t There Yet

Only 44% of finance and accounting leaders say they are “Very Confident” that AI can operate within their existing controls and audit frameworks. The rest fall somewhere between partial confidence and skepticism. There are parts of a business where that level of uncertainty can be managed. In finance, it slows adoption because the accountability does not change.

Finance still owns the number, including how it was produced and how it stands up during an audit. Often, this requires a person to validate outputs, especially for revenue booking.

My take: When uncertainty is high, teams default to checking the work themselves, slowing everything down and limiting the value AI can deliver.

There is also a broader concern around how AI is being used. If it replaces critical reasoning instead of supporting it, teams lose the habit of questioning outputs. Used well, AI becomes another input to evaluate.

The Trust Gap Is Structural

It’s not surprising that 91% of leaders surveyed express concerns about using AI in core financial processes such as revenue recognition or quote-to-cash. The concerns are consistent: data security, lack of oversight, and questions around data quality and reliability.

In many cases, AI is still applied outside of the system of record, especially across complex quote-to-cash processes where data spans contracts, invoices, and revenue schedules. Data is extracted, processed elsewhere, and then reintroduced, creating gaps in auditability and making it harder to trace results across the full workflow.

My take: Context is a major factor. The quality of an AI output depends on the inputs it receives. Even relatively straightforward areas like taxes require detailed context, such as where you live, your dependents, your deductions, and other specifics. Without that level of detail, outputs can vary significantly.

That creates a practical challenge for finance teams. How do you know if the model had the right inputs? What attributes need to be present for the output to be reliable?
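One way to make the "right inputs" question concrete is to write down the required attributes and check for them before an output is trusted. The sketch below is illustrative only: the attribute names and the `missing_attributes` helper are hypothetical and do not describe any particular product or Zuora's own implementation.

```python
# Hypothetical list of attributes a revenue-related AI request must carry.
# A real list would come from your own policies and controls.
REQUIRED_ATTRIBUTES = (
    "contract_id",
    "invoice_id",
    "currency",
    "contract_start",
    "contract_end",
)


def missing_attributes(inputs: dict, required=REQUIRED_ATTRIBUTES) -> list:
    """Return the required attribute names that are absent or empty."""
    return [name for name in required if inputs.get(name) in (None, "")]


def has_required_context(inputs: dict) -> bool:
    """True only when every required attribute is present and non-empty."""
    return not missing_attributes(inputs)


if __name__ == "__main__":
    request = {"contract_id": "C-1001", "currency": "USD", "invoice_id": ""}
    print(missing_attributes(request))
    print(has_required_context(request))
```

If the list of missing attributes is not empty, the output is treated as unreliable and sent back for more context rather than used.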

Governance is becoming a key inflection point, with more focus on ensuring steps are followed and outputs are vetted before they are used.

This is also why standards around AI governance are starting to matter more. Zuora recently achieved ISO/IEC 42001 certification, reinforcing a focus on governed and accountable AI.

Where Trust Starts to Build

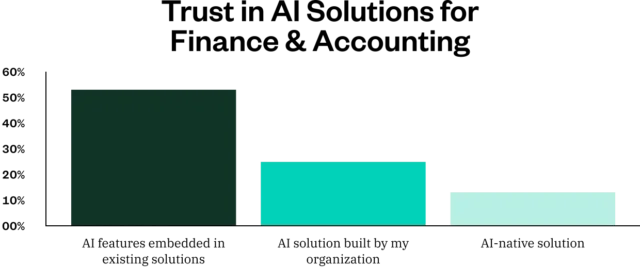

One of the more telling data points is that 53% of leaders say they would trust AI embedded within their existing systems more than standalone or internally built solutions.

That aligns with how finance already works. Trust comes from operating on complete data, within established controls, and producing outputs that can be traced back to underlying transactions. Finance systems of record reflect years of accumulated logic, compliance requirements, and edge cases. When AI operates within that foundation, it carries that context forward and reduces risk.

Trust also depends on having a clear validation process. Teams need to know what checks are required before relying on an output, whether that is a defined checklist, required attributes, or clear escalation paths. This doesn’t mean removing controls. It changes how they are applied. On our team, we’re using AI to review 100% of revenue contracts while keeping existing controls in place. As confidence builds, we are raising review thresholds so that our team’s testing can be more targeted and efficient while AI provides full coverage.
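A validation process like the one described above can be expressed as a simple routing rule. This is a minimal sketch under stated assumptions: the `ContractReview` fields, the `route` function, and the dollar thresholds are all hypothetical, chosen only to show how a checklist, a human review threshold, and an escalation path might fit together.

```python
from dataclasses import dataclass


@dataclass
class ContractReview:
    """Result of an AI review of one revenue contract (hypothetical shape)."""
    contract_id: str
    contract_value: float
    ai_flagged: bool          # True if the AI review raised an issue
    checklist_complete: bool  # True if every required check was recorded


def route(review: ContractReview, human_review_threshold: float) -> str:
    """Decide what happens to a contract after the AI review.

    Every contract gets the AI review; this function only decides whether
    a person also needs to look at it.
    """
    if not review.checklist_complete:
        return "escalate"        # required checks missing: do not rely on output
    if review.ai_flagged or review.contract_value >= human_review_threshold:
        return "human_review"    # existing control stays in place
    return "accept"              # covered by the AI review alone


if __name__ == "__main__":
    review = ContractReview("C-1001", 75_000.0, False, True)
    # Raising the threshold over time narrows human testing to larger contracts.
    print(route(review, human_review_threshold=50_000.0))
    print(route(review, human_review_threshold=100_000.0))
```

Raising `human_review_threshold` as confidence builds changes how the control is applied without removing it: flagged contracts and incomplete checklists still go to a person.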

My take: AI in finance is entering a period where it has to deliver measurable outcomes. The question isn’t whether teams are using it, but whether it can deliver results that hold up under the same standards as the rest of the finance stack.

Until that happens, the gap between adoption and impact will remain. Productivity matters, but only if you can trust the output. In finance, trust determines what actually gets used and what gets left on the sidelines.

Practical Conversations, On Demand

Supercharging Productivity with AI in Quote-to-Cash

AI is starting to show up in quote-to-cash in real ways, but finance is still held to a higher bar. Speed alone is not enough. The outputs have to be trusted. For teams looking to see where AI is actually delivering impact today, watch this video to learn how it’s being embedded into core workflows, what it takes to validate results, and how to increase productivity without introducing more risk or losing control.
