AI in Finance: New Data Shows Big Expectations, Limited Impact, and a Critical Trust Gap

Not all AI use cases are created equal, and the bar is particularly high in finance. Unlike marketing or sales use cases, where speed and experimentation are rewarded, finance demands accuracy, compliance, and auditability, making the path to adoption and value realization far more difficult. As quote-to-cash processes grow more complex, finance teams are spending more time manually reconstructing what happened across invoices, contracts, and revenue. And while nearly all finance teams report utilizing AI in some capacity to help lighten this load, most leaders still lack deep confidence in the outputs and struggle to fully verify how results are produced.

To better understand how companies today are navigating this shift, Zuora commissioned The Harris Poll to survey more than 300 finance and accounting decision makers on their sentiment, trust levels, and concerns around AI, specifically in finance. While the results highlight strong interest and investment, there’s also real hesitation about how and where it can be trusted. Here’s what we’re seeing.

1. Decision makers are investing in AI, but financial results are lagging

As investment accelerates, so do expectations. What was once treated as experimentation is now under scrutiny from boards, investors, and executive teams demanding clear, measurable returns from AI. Finance leaders, in particular, are increasingly being asked to quantify its value, whether through efficiency gains, faster close cycles, or measurable margin improvement.

According to the data, 92% of finance and accounting decision makers say their finance teams are using AI tools, yet for most, results have not materialized:

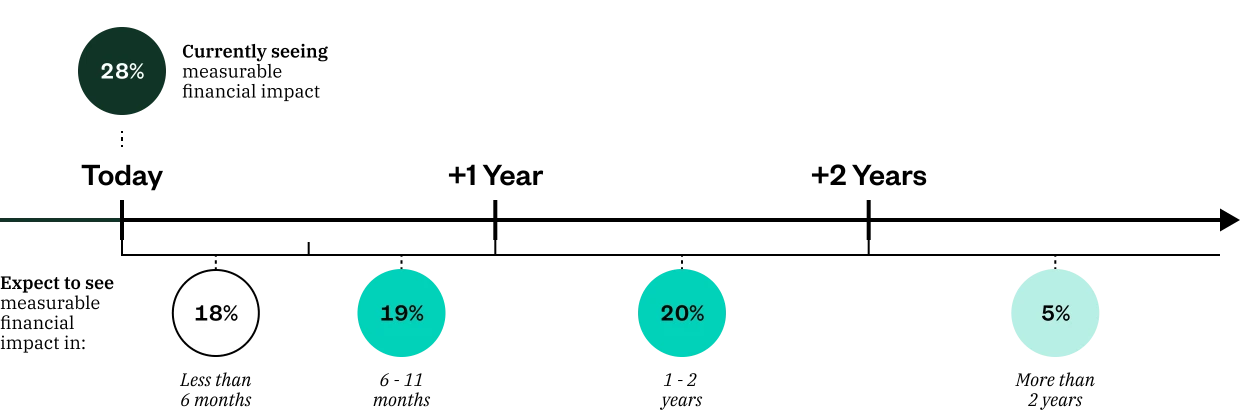

- Only 28% of finance and accounting decision makers whose companies have made investments in AI tools say they are currently seeing a measurable financial impact from their investment in AI tools

- A strong majority (67%) say they are not yet seeing measurable financial results today

- While 37% expect to see results within the next year, 25% think it will take a year or more, and 6% are unsure how to measure the financial impact

Source: The Harris Poll on behalf of Zuora from 300+ finance and accounting decision makers.

This gap between investment and outcomes is even more pronounced at larger companies. Decision makers from organizations with 1,000+ employees report higher levels of AI investment (100%, compared to only 81% among those at organizations with under 100 employees), yet they are also more likely to say they haven’t seen measurable financial impact (72% among leaders at organizations with 1,000+ employees vs 48% among those at organizations with under 100 employees).

Leaders at larger organizations are also less optimistic that AI will deliver impact soon. Among those whose organizations have invested in AI tools, 35% at organizations with 1,000+ employees say it will take a year or more to see measurable financial impact, compared with 15% at organizations with under 100 employees.

To understand why results are lagging, it’s important to look at where decision makers say AI is falling short in real financial operations.

2. Gaps remain between AI promise and reality

A vast majority (87%) of finance and accounting decision makers say there are gaps between AI promise and reality in finance. And for many, making AI work within real financial operations is the real challenge.

Difficulty integrating AI outputs into finance workflows tops the list of gaps reported between AI promise and reality, with 41% naming it as among the biggest gaps they’ve seen. A third (33%) say the inability to audit or explain AI-driven results across systems is among the biggest gaps.

3. Confidence in AI exists, but not fully

When AI falls short in practice, it creates doubt. If results are lagging, how can finance leaders actually trust AI to operate in core financial workflows? In finance, trust isn’t an abstract concept. Instead, it’s earned through precision, traceability, and control. Systems must produce outputs that can be validated, explained, and reconciled within strict audit and regulatory frameworks. If AI cannot meet that standard, it introduces risk rather than reducing it.

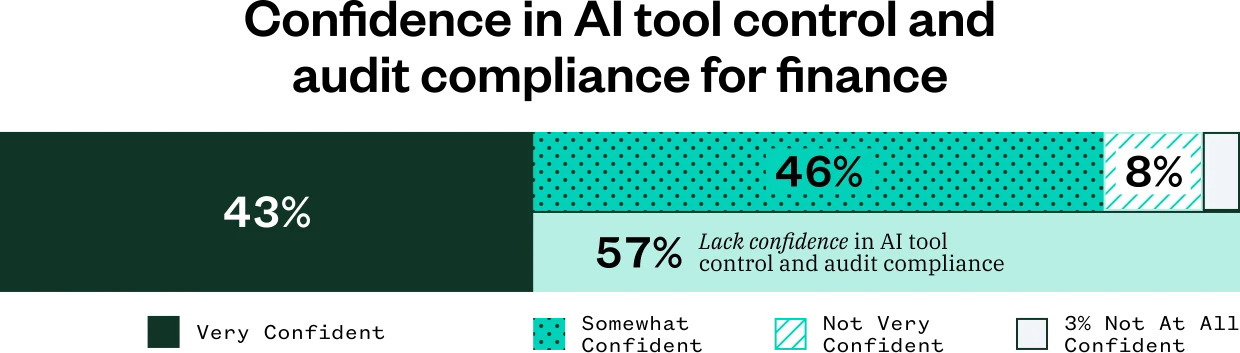

While the data shows leaders whose finance teams use AI tools have a baseline level of confidence, it hasn’t yet translated into full confidence. Only 43% say they are “very confident” that the current AI tools used by their finance team will operate within their existing financial controls and audit frameworks. That leaves a majority who are only “somewhat confident” (46%) or “not at all/not very confident” (11%). In finance, that level of doubt doesn’t just slow adoption; it helps explain why AI investment isn’t yet translating into measurable impact.

This lack of confidence is driven by a set of specific concerns about using AI in core financial processes.

4. Nearly all decision makers have concerns using AI for core financial processes

Considering the nature of the role, it’s no surprise finance and accounting leaders are cautious about applying AI to mission-critical workflows. However, the near-universal level of concern uncovered in the data suggests this hesitation signals a deeper trust gap.

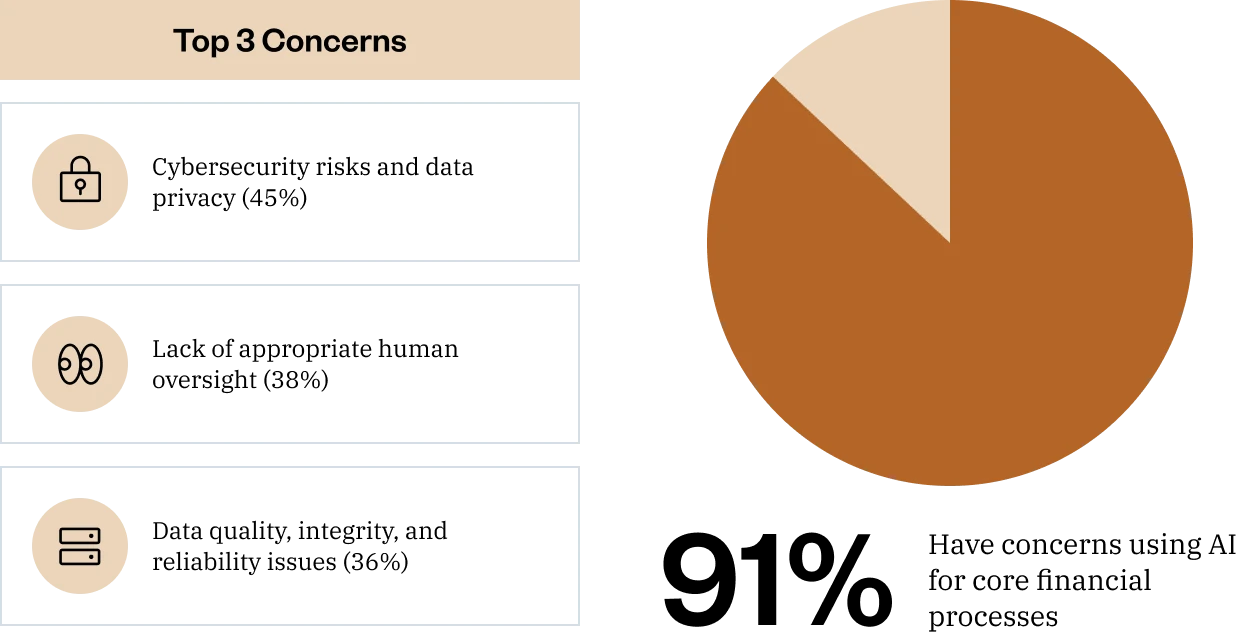

The vast majority (91%) of finance and accounting decision makers say they have concerns with using AI for core financial processes, such as revenue recognition, quote-to-cash, or financial decision-making.

Digging deeper, these concerns aren’t abstract; they are rooted in specific, high-stakes risks. The top three concerns about using AI to operate core financial processes are:

- Cybersecurity and data privacy (45%)

- Lack of appropriate human oversight (38%)

- Data quality, integrity, and reliability issues (36%)

These underscore a fundamental requirement in finance: systems must operate within strict controls and deliver consistent, auditable outcomes – and AI is not immune to these stipulations.

At the core of these concerns is how AI is often implemented: AI delivered outside of the trusted finance systems creates risk.

5. How AI is delivered plays a major role in trust

Until recently, most finance teams experimenting with AI only had access to point solutions layered on top of their systems of record. Data is exported, manipulated elsewhere, and then pushed back in, creating gaps in audit trails, adding integration risk, and exposing more surface area for security and privacy issues. These tools also rarely see end-to-end quote-to-cash data or understand revenue rules, contract terms, and industry context. Given that this has been the status quo, it’s no wonder finance leaders have been slow to trust AI.

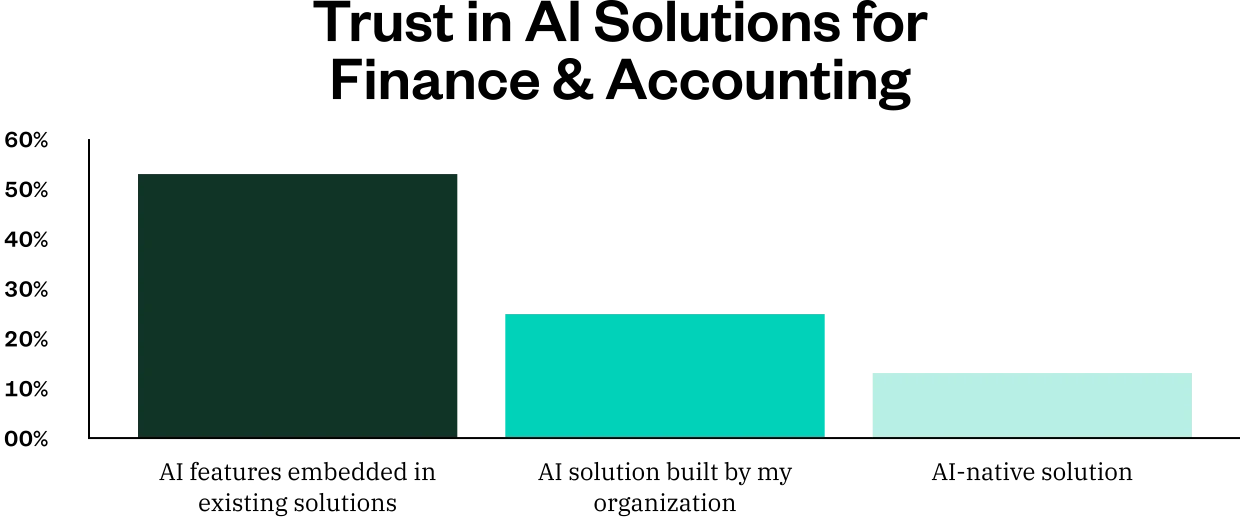

So what does “trustworthy AI” actually look like in the context of finance? The data indicates a desire for AI embedded directly into existing systems. Over half (53%) of finance and accounting decision makers say that, when evaluating AI tools for finance, they would most trust AI features embedded in existing solutions, compared with an AI solution built by their own organization (25%) or an AI-native solution (13%).

Trustworthy AI in finance should operate on complete, real-time data from the system of record, inherit existing roles and controls, and produce explainable outputs that can be traced back to underlying transactions – all grounded in finance logic, not generic machine learning.

That’s the approach behind Zuora AI. By embedding intelligence directly into critical finance processes across quote-to-cash, Zuora AI delivers insights and automation within controlled, transparent, and auditable workflows. Instead of adding another layer of complexity, it brings AI into the system of record finance already depends on.

To learn more about how to operationalize AI safely, join our April 23 webinar: “AI in Quote-to-Cash: What Finance Needs to Know” or visit zuora.com.

Methodology

This survey was conducted online within the United States by The Harris Poll on behalf of Zuora from March 18 to 25, 2026, among 321 finance/accounting decision makers (those who at least share in decision making) ages 21 and over. The sampling precision of Harris online polls is measured by using a Bayesian credible interval. For this study, the sample data is accurate to within +/- 6.4 percentage points using a 95% confidence level. For complete survey methodology, including weighting variables and subgroup sample sizes, please contact Margaret Juhnke at mpack@zuora.com.
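
For readers who want to put the +/- 6.4 point figure in context, the short sketch below compares it with the textbook margin of error for an unweighted simple random sample of 321. This is only an illustrative approximation and not The Harris Poll's method: the survey reports a Bayesian credible interval, while the sketch assumes the standard normal-approximation formula and a worst-case proportion of 50%. The function names are hypothetical.

```python
import math

Z_95 = 1.96  # normal critical value for a 95% interval (assumption: normal approximation)

def srs_margin_points(n, p=0.5):
    """Worst-case margin of error, in percentage points, for a simple random sample."""
    return 100 * Z_95 * math.sqrt(p * (1 - p) / n)

def implied_design_effect(reported_points, n):
    """How much wider (in variance terms) the reported margin is than the textbook one."""
    return (reported_points / srs_margin_points(n)) ** 2

n = 321          # sample size stated in the methodology
reported = 6.4   # reported margin, in percentage points

print(round(srs_margin_points(n), 1))                # textbook margin for n = 321
print(round(implied_design_effect(reported, n), 2))  # ratio implied by the reported margin
```

Under these assumptions the textbook margin comes out to roughly 5.5 points, so the reported 6.4 points is somewhat wider, which is what one would typically expect once weighting is taken into account. Subgroup comparisons (for example, companies with 1,000+ employees versus those with under 100) rest on smaller samples and carry wider margins still.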